Whether you choose a private cloud (e.g. OpenStack) in the company's data centre, a public cloud (e.g. AWS), or some mix of the two, there are plenty of good tools and documentation resources to stand up the cloud for your organisation. There are very talented professional services firms that can cut the cloud's time-to-deployment and provide high service level guarantees from day one, so that your organisation's IT workers can learn from the best and have ample time to train up on all things cloud. Several IT components - enterprise servers, networking, storage - are increasingly tailored to the cloud use case, with hardware vendors making sure there are drivers and documentation to interface with popular cloud offerings. It seems as if it's only getting easier to deploy a private cloud. Public cloud offerings have gone a step further: not only do they provide turnkey infrastructure solutions, they also provide several platform services so you don't have to build these support platforms yourself (think managed databases, CDNs, load balancing, DNS, monitoring, archival storage etc.). In short, the debate about cloud maturity and support is over. The cloud is more mature, better supported and better documented than most DIY bare-metal infrastructure that IT organisations have been supporting until now.

Setting up is the Easy Part

Here is how the cloud story unfolds in a large enterprise: the CIO's strategic initiative bootstraps the cloud of choice and, many dollars later, a shiny new cloud is born in the IT organisation. IT leaders proclaim the end of the dark ages of waiting months before bare-metal servers could be lit up in data centres. The business units whose applications will consume the cloud wait in anticipation of converting their capex IT expenditure into opex. Legions of IT engineers and developers are trained up in the specific cloud technology chosen by the organisation. They all hear the spiel about DevOps, scalability and microservices and how to engineer applications to fit into the cloud. The organisation has arrived at the forefront of the IT landscape and there is something for everyone (it seems).

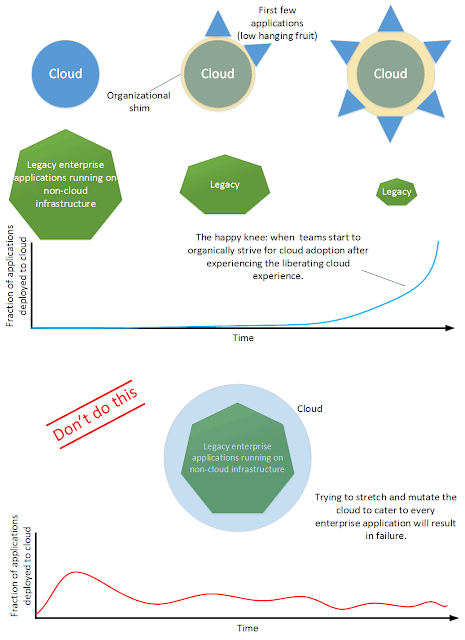

Six to twelve months later things could have gone two ways. Either there is genuine transformation and notable enterprise applications start migrating to the cloud, or the cloud is deemed a failure, with many application owners finding reasons (legitimate or not) to delay, postpone or revisit the decision to move to the cloud. To address these objections the IT organisation is forced to retrofit and re-architect the cloud to make it more "enterprise friendly". The cloud becomes an albatross hanging around the IT organisation's neck - a few applications have moved to the cloud and therefore it cannot be shelved or re-engineered from scratch. Most applications continue in legacy non-cloud mode, straining the IT organisation's resources to maintain both legacy and cloud infrastructure. Application owners feel the push-back from the IT organisation when it comes time to invest more in non-cloud (bare-metal) infrastructure, as IT tries desperately to twist the arm of the application owners to "get on" the cloud instead. Even then, little details - such as the organisation's entrenched database technology not being supported in the cloud - effectively rule out the cloud as an option for most of the enterprise's applications. You can almost hear the deafening noise when cloud-high expectations (pun intended) come crashing down.

There are two potential pitfalls that are the most problematic. I call them the "Big Happy Family Syndrome" and "Everyone is Invited Syndrome".

Big Happy Family Syndrome

The cloud is incredibly flexible. In their eagerness to earn enterprise business, many third-party developers have been offering ways to make the cloud "enterprise friendly". These solutions can irreparably mutate the cloud and make it lose its unique value proposition. They give enterprises excuses for not moving toward cloud-capable application architectures by effectively saying, "It's okay to do things the old way, you can still bask in the glory of declaring you are on the cloud without re-architecting your 15-year-old dinosaur application that everyone is so comfortable with". A wolf in sheep's clothing.

Here is an example: proprietary storage company "X" has a large footprint in an enterprise's data centre. The whole storage team is trained in this technology and is loath to adopt the new cloud storage technology (for example, in the case of OpenStack, this may be Ceph) that has been heavily tested and automated by the cloud developers. Company "X" writes a storage driver to integrate its array with OpenStack, but the level of integration, testing and automation is not the same as with Ceph. Automation is uncomfortable - one has to deal with things like authentication, quota and capacity management and, most importantly, it seems to mean giving up control and making the storage team redundant (this is obtuse thinking; in my own experience the cloud creates many more opportunities for IT specialists willing to learn).

Under the pretext of "enterprise grade storage to back the cloud", the whole storage piece is retrofitted and still driven via manual tickets, meaning that any virtual machine that needs storage has to give up on automated provisioning as it waits (for hours or even days) for the ticket to be actioned. The organisation in this example has just removed one of the pillars of the cloud's value proposition - spinning up infrastructure on demand, instantly.

Everyone is Invited Syndrome

It is every CIO's dream to move all applications to the cloud. There are valid reasons to want to achieve this, but forcing all enterprise IT applications to get on the cloud from day one is a mistake. Many IT managers still believe that the cloud is just virtualisation - virtual machines on demand for running applications - and so, if a suitable VM can be provisioned (in terms of CPU/memory/storage capacity), any application can be moved to the cloud. In reality, moving to the cloud goes far beyond running an application in a VM, but unfortunately this is all that can be achieved when applications are shoe-horned into the cloud under an unrealistic time-to-cloud constraint.

The reality is that many enterprise applications need to be re-architected and perhaps even rebuilt (with more cloud-friendly constructs) before they become cloud citizens. Yes, it is possible to run virtually any application in the cloud, but by forcing the IT organisation to support each unique application deployment on the cloud you are setting yourself up for failure. IT teams are not nearly as scalable as cloud infrastructure - the technical debt of creating one-off VMs in the cloud will quickly overwhelm them and lead to missed configurations, broken VMs and catastrophic failures. Moreover, clouds are designed with the assumption that service availability is distributed across several hypervisors. Every time a legacy "VIP" application is installed on a single VM with no distributed capabilities, it prevents the infrastructure team from rebooting that hypervisor without an outage, for example when its OS needs to be patched. In general, introducing exceptions and a class system in the application population takes infrastructure agility out of the cloud value proposition.
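The hypervisor patching problem can be made concrete with a small sketch. The Python below is purely illustrative: the `VM` record, the `blockers_for_reboot` helper and the host and service names are all hypothetical, not part of any cloud platform's API. It simply lists the services that would go completely offline if a given hypervisor were rebooted, because every one of their VMs lives on that host.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    service: str      # logical application the VM belongs to
    hypervisor: str   # host the VM currently runs on


def blockers_for_reboot(vms: list[VM], hypervisor: str) -> list[str]:
    """Return services that would go fully offline if `hypervisor` rebooted.

    A service blocks the reboot when all of its VMs run on that one host,
    i.e. it has no replica elsewhere to keep serving traffic.
    """
    hosts_by_service: dict[str, set[str]] = {}
    for vm in vms:
        hosts_by_service.setdefault(vm.service, set()).add(vm.hypervisor)
    return sorted(
        service
        for service, hosts in hosts_by_service.items()
        if hosts == {hypervisor}
    )


# Hypothetical fleet: a distributed web tier and one single-instance app.
fleet = [
    VM("web-1", "web-tier", "hv-a"),
    VM("web-2", "web-tier", "hv-b"),
    VM("erp-1", "legacy-erp", "hv-a"),  # the "VIP" application
]

print(blockers_for_reboot(fleet, "hv-a"))  # ['legacy-erp']
print(blockers_for_reboot(fleet, "hv-b"))  # []
```

In this toy fleet the web tier never blocks maintenance because it has a replica on another host, while the single legacy VM pins `hv-a` in place until someone negotiates a downtime window.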

Which Way Then?

The happy reality is that most application owners "get it" about why they need to be on the cloud. They are genuinely interested in modernising their architecture but may not have the resources or time to do so in sync with a cloud roll-out. Many COTS (commercial off-the-shelf) applications are also being (slowly) re-written and re-architected to become more cloud friendly. Moreover, enterprise SaaS applications (for example, remotely hosted Workday) are making inroads into enterprises and displacing monolithic legacy applications as time goes by. Hopefully some of the problematic application workloads will get outsourced to software services hosted off-premises.

- Start small and grow organically. Don't be misled by capacity plans that assume that everything will move to the cloud in the next 12 months. Let the cloud grow organically - it's one of the key strengths of infrastructure as a service. Don't worry if initial adoption is slow or if the ramp-up seems to be taking too long (the more heterogeneous the application mix, the more time this will take - the initial cloud automation process is slow). There comes an inflection point when application owners see their peers enjoying the benefits of automation, self-healing and elastic capacity, and there is a mass conversion to the cloud. Peer pressure is an incredible motivator for application owners to move to the cloud.

- Focus on the low-hanging fruit first. Good candidates are applications like the stateless web tier or the dev/test environment for a few development teams. Facilitate their move to the cloud and use them as poster children to make other application owners drool over what the cloud could do for them if only they re-architected their applications. It is usually easier to work with new applications than to migrate older ones, so in the beginning keep an eye out for new services being developed and deployed by the enterprise. For example, the organisation may be working on exposing an HTTP-based RESTful API to its customers and, given the need to scale with customer demand, this is a great citizen for the cloud.

- Protect the cloud way. Make sure you do not dilute the cloud's value proposition by bending to every demand an application owner makes. Circle your wagons around the cloud architecture team and let them have the final say when it comes to change requests for key cloud functionality and components. It's perfectly fine to build an organisational shim around vanilla cloud installations (e.g. custom authentication or monitoring) but do not transplant legacy technology into the cloud - keep the shim slim. Keeping the cloud close to the original also makes it easier to roll out upgrades and patches released by the cloud developer community. Enterprises get the additional benefit of forcing the owners of legacy dinosaurs to rethink their applications for the future rather than desperately clinging to the past. After all, applications have life cycles too and you don't want to be burdened by the past forever. The greatest risk of all is getting stuck with ageing applications that few employees understand or that are supported by a single vendor: the antithesis of future-proofing enterprise IT.